CAPABILITY

Traditional Approach

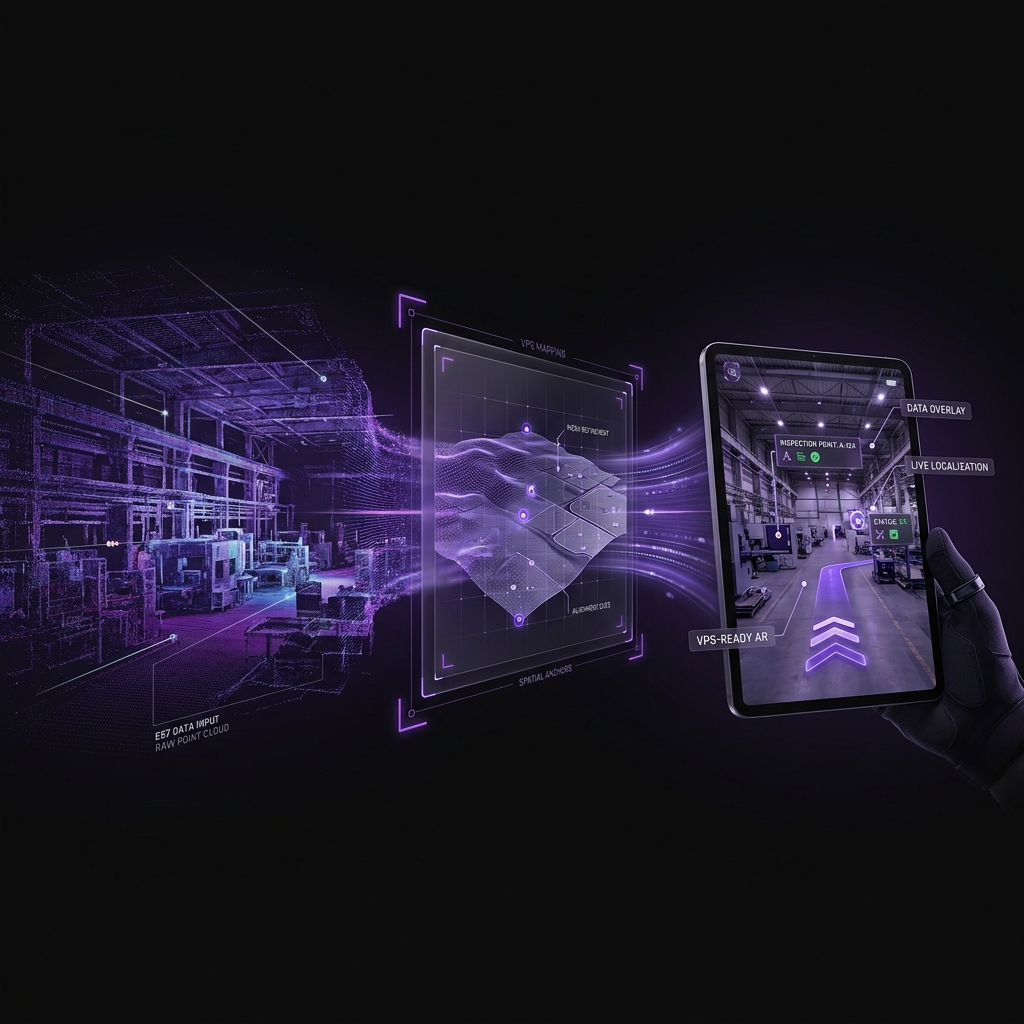

MultiSet E57 Ingest

Scan input

Platform-specific capture only

Any E57: Matterport, Leica, NavVis, Faro, Xgrids

Time to first AR map

Hours to days (rescan + process)

<1 hour from existing E57 upload

Coordinate preservation

New coordinate system per rescan

Preserves original scan coordinates

Hardware lock-in

Tied to one scanner ecosystem

Open E57 standard. Switch anytime.

Multi-floor coverage

Manual stitching or separate projects

Native MapSet stitching across floors

Deployment

Cloud-only or app-embedded

Cloud / VPC / on-prem / on-device