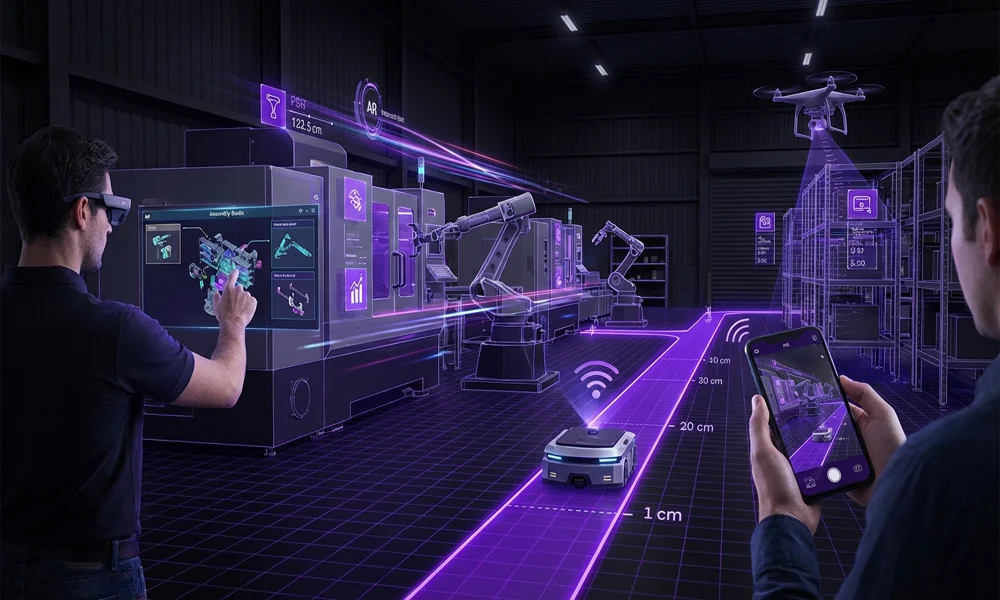

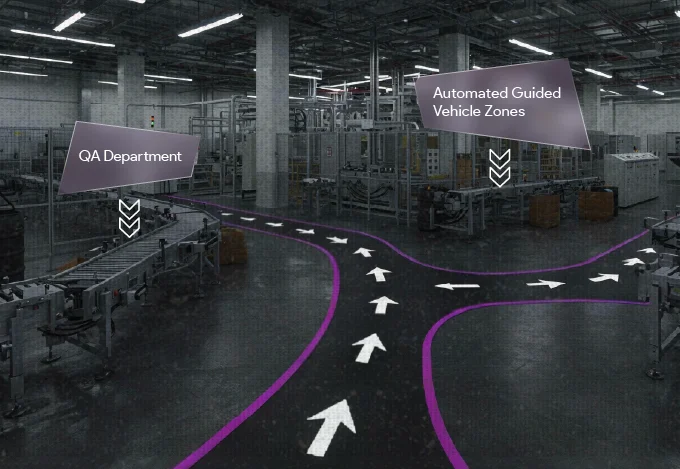

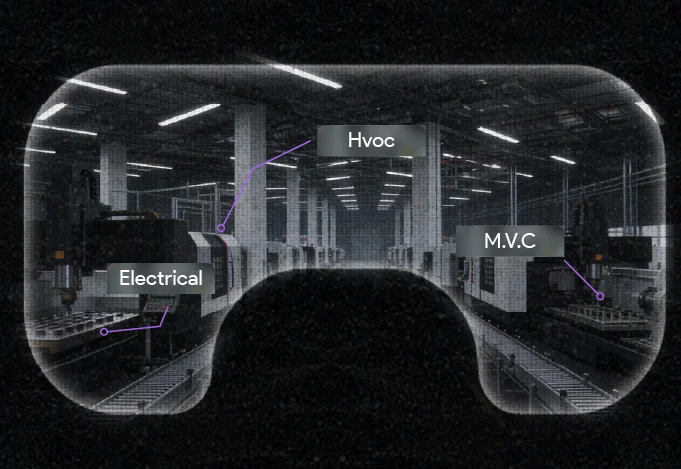

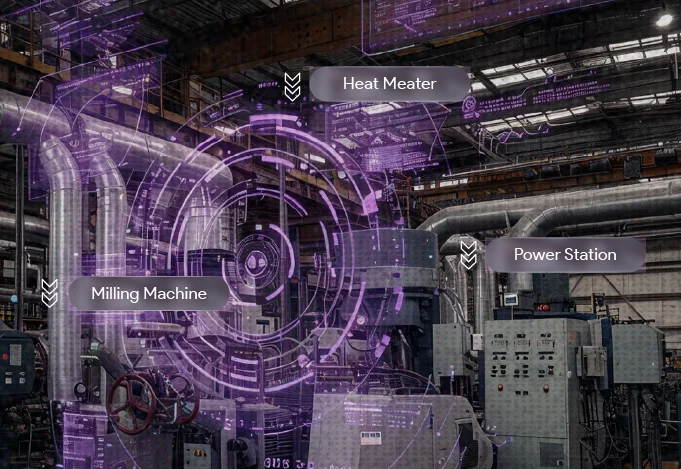

Instead of installing thousands of physical sensors to track assets, or relying on GPS that is unreliable in dense or indoor areas, MultiSet turns the camera of any standard device (phone, tablet, or robot) into a precision sensor. It compares what the camera sees against a digital map of your facility to provide centimeter-level tracking without any new infrastructure.