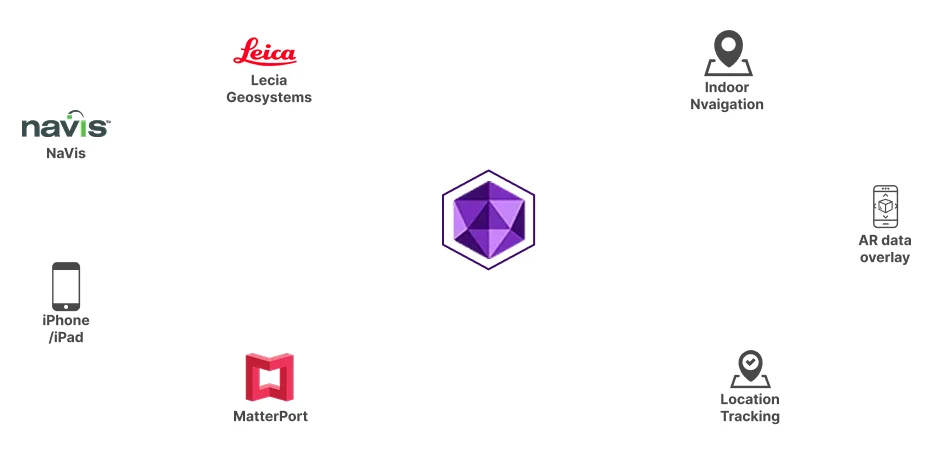

MultiSet analyzes 3D scans of your building to create a "fingerprint" of the environment. This fingerprint is a pre-built map: a device can recognize where it is almost instantly by comparing what its camera sees against the map, enabling accurate navigation without GPS.
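The general idea of map-based localization (match what the camera sees against a stored map, then infer position) can be illustrated with a toy sketch. This is not MultiSet's pipeline, which the text above does not describe. The map contents, the function names `match` and `localize`, and the 2D simplification are all hypothetical. Matching uses a nearest-neighbour search with a ratio test. The sketch also assumes each observation already gives the landmark's offset from the device in building axes, so that each match "votes" for a position. Real systems typically work in 3D and estimate the full camera pose, for example with PnP inside a RANSAC loop.

```python
import math

# Hypothetical pre-built map: each landmark has a feature descriptor
# (its entry in the "fingerprint") and a known 2D position in building
# coordinates, in metres.
MAP = [
    {"desc": (0.9, 0.1, 0.0), "pos": (0.0, 0.0)},
    {"desc": (0.1, 0.8, 0.2), "pos": (4.0, 0.0)},
    {"desc": (0.0, 0.2, 0.9), "pos": (4.0, 3.0)},
    {"desc": (0.5, 0.5, 0.5), "pos": (0.0, 3.0)},
]


def match(query_desc, landmarks, ratio=0.8):
    """Return the index of the nearest landmark, or None if the match is ambiguous.

    Ratio test: accept the nearest descriptor only if it is clearly closer
    than the second nearest one.
    """
    dists = sorted(
        (math.dist(query_desc, lm["desc"]), i) for i, lm in enumerate(landmarks)
    )
    (best_d, best_i), (second_d, _) = dists[0], dists[1]
    return best_i if best_d < ratio * second_d else None


def localize(observations, landmarks):
    """Estimate the device position from (descriptor, offset) observations.

    Simplifying assumption: each offset is the landmark's displacement from
    the device, already expressed in building axes (orientation known).
    Each accepted match votes for position = landmark_pos - offset, and the
    votes are averaged. Returns None if nothing matched.
    """
    votes = []
    for desc, (dx, dy) in observations:
        i = match(desc, landmarks)
        if i is not None:
            lx, ly = landmarks[i]["pos"]
            votes.append((lx - dx, ly - dy))
    if not votes:
        return None
    n = len(votes)
    return (sum(x for x, _ in votes) / n, sum(y for _, y in votes) / n)


# A device standing at (1, 1) sees three landmarks with slightly noisy descriptors.
observations = [
    ((0.85, 0.15, 0.0), (-1.0, -1.0)),
    ((0.1, 0.75, 0.25), (3.0, -1.0)),
    ((0.05, 0.2, 0.85), (3.0, 2.0)),
]
print(localize(observations, MAP))  # → (1.0, 1.0)
```

Averaging votes keeps the sketch short, but a single wrong match would pull the estimate off. That weakness is why practical systems wrap pose estimation in an outlier-rejecting scheme such as RANSAC.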